Product Feature Prioritization Done Right

For many agile product teams, feature prioritization is the bane of their existence. What makes it so demanding and grueling comes down to a single point: prioritization is purely about decision-making. There is no creativity involved, no personal challenges or exciting discoveries. Just a concentrated batch of decisions.

“OMG we have GOT to push that one to the TOP of the list!!” This level of determination is reserved for precious few features. The truth is that most prioritization decisions need to be the result of a methodical process of evaluation and estimation.

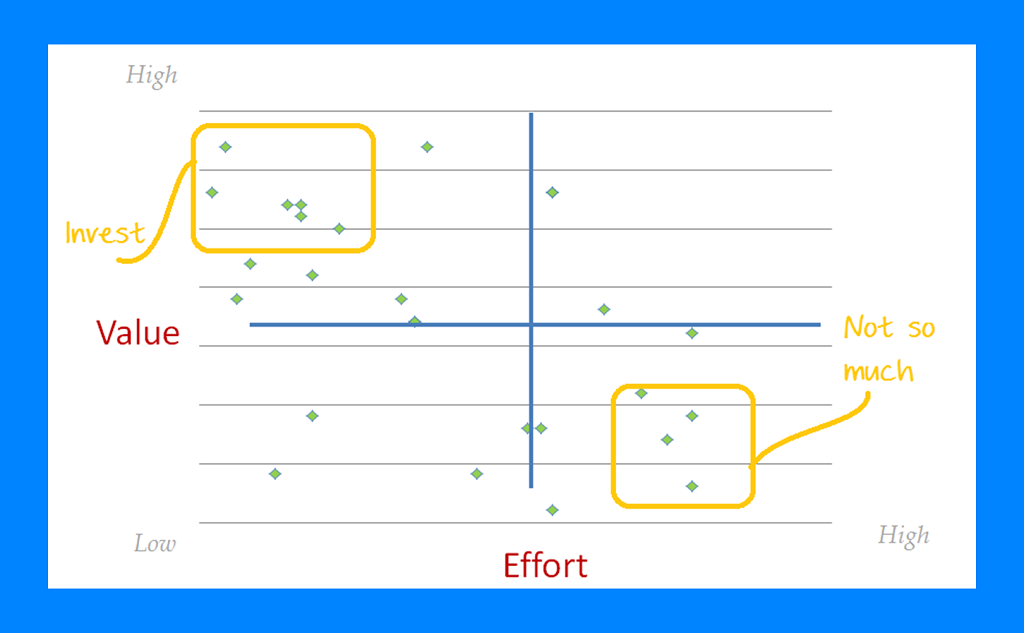

The prioritization process itself comes down to weighing two primary parameters against each other:

How much will I have to invest in this feature?

Vs.

What will my profit from it be?

Decisions, decisions: How to make the right one

The investment is measured in resources: work hours, development cost, time and effort. Profit, however, refers not only to the bottom-line income you may see from the feature, but also to the appeal it may have for potential users, possible upsell opportunities for existing features, and various other benefits.
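One simple way to put the invest-versus-profit comparison into numbers is a value-per-effort ratio. The sketch below is an illustration, not Craft's formula: `Feature`, `value_per_effort` and `rank_by_return` are hypothetical names, and it assumes value and effort have already been graded on scales the team agrees on.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    value: float   # graded benefit ("profit"): revenue, appeal, upsell potential
    effort: float  # graded investment: work hours, cost, time


def value_per_effort(feature: Feature) -> float:
    """Return how much value each unit of effort buys."""
    if feature.effort <= 0:
        raise ValueError(f"effort must be positive for {feature.name!r}")
    return feature.value / feature.effort


def rank_by_return(features: list[Feature]) -> list[Feature]:
    """Order features so the best value for effort comes first."""
    return sorted(features, key=value_per_effort, reverse=True)


# Hypothetical backlog with illustrative grades.
backlog = [
    Feature("Dark mode", value=4, effort=2),
    Feature("SSO", value=9, effort=6),
    Feature("CSV export", value=6, effort=1),
]
print([f.name for f in rank_by_return(backlog)])
# prints ['CSV export', 'Dark mode', 'SSO']
```

With these made-up numbers, SSO has the highest raw value but lands last, because each unit of effort spent on it buys the least.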

There are many ways to calculate the cost-effectiveness of features in the pipeline. At Craft, we integrate four factors into the prioritization process:

- Story points

- Value – what its value will be to our users and to Craft

- Effort – how many resources, how much time and how many work hours the feature will cost us

- Importance –

Sean McBride at Intercom came up with a nifty model he calls RICE, which integrates Reach, Impact, Confidence and Effort into a final score that helps his team decide. Tomer London at Gusto warns against comparing apples to oranges and offers a new framework for deciding between features.
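RICE, as Intercom describes it, combines its four factors into a single score: Reach times Impact times Confidence, divided by Effort. A minimal sketch follows. The function name and input checks are our own; the suggested impact scale (3 for massive down to 0.25 for minimal) and the person-month unit for effort follow Intercom's write-up, so adjust them if your team uses different units.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """Return (Reach * Impact * Confidence) / Effort.

    reach:      people or events affected per time period (e.g. users per quarter)
    impact:     per-person multiplier, e.g. 3 massive, 2 high, 1 medium,
                0.5 low, 0.25 minimal
    confidence: fraction between 0 and 1 (0.8 means 80%)
    effort:     person-months of work; must be positive
    """
    if effort <= 0:
        raise ValueError("effort must be positive")
    if not 0 <= confidence <= 1:
        raise ValueError("confidence must be between 0 and 1")
    return reach * impact * confidence / effort


# Two hypothetical features.
print(rice_score(reach=500, impact=2, confidence=0.8, effort=4))     # 200.0
print(rice_score(reach=2000, impact=0.5, confidence=0.5, effort=1))  # 500.0
```

The second feature scores higher despite lower impact and confidence, because it reaches more people for a quarter of the effort.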

Product Feature Prioritization Frameworks

In addition to RICE, there are many other useful product feature prioritization frameworks. Knowing several of them is valuable for a product manager, because different circumstances might call for different approaches to weighing the competing features on your list.

For example, a simpler alternative to RICE is the Features by Importance framework. Product teams use this approach when they want a quick sense of which features on their backlog are likely to have the greatest impact on their market or the company’s bottom line. They simply order their features by labeling each one as a Blocker (a must-have feature the product can’t be delivered without), High Importance, Medium Importance, or Low Importance.
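Because Features by Importance only sorts features into four buckets, it needs no arithmetic at all. The sketch below is illustrative: the `Importance` enum and `order_by_importance` helper are hypothetical names, and it assumes features within the same bucket should keep their existing backlog order.

```python
from enum import IntEnum


class Importance(IntEnum):
    # Lower number = higher priority, so an ascending sort puts Blockers first.
    BLOCKER = 0
    HIGH = 1
    MEDIUM = 2
    LOW = 3


def order_by_importance(backlog: dict[str, Importance]) -> list[str]:
    """Return feature names with Blockers first and Low Importance last.

    sorted() is stable and dicts keep insertion order, so features in the
    same bucket stay in their original backlog order.
    """
    return sorted(backlog, key=lambda name: backlog[name])


# Hypothetical backlog.
backlog = {
    "Audit log": Importance.LOW,
    "Login": Importance.BLOCKER,
    "Search": Importance.HIGH,
    "Themes": Importance.LOW,
    "Billing": Importance.BLOCKER,
}
print(order_by_importance(backlog))
# prints ['Login', 'Billing', 'Search', 'Audit log', 'Themes']
```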

In the RICE framework, remember, your team will also analyze each feature’s effort level and the team’s confidence that they’re correct in their assessment of its potential value. That requires more thorough research and analysis than the Features by Importance framework.

Other frameworks include Weighted Shortest Job First (WSJF), which helps you weigh the financial cost of developing (or not developing) specific features on your backlog, and the Kano model, which your team can use to determine how much customer delight a given feature will create.
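WSJF is usually written as Cost of Delay divided by job size (a stand-in for job duration). The sketch below follows the Scaled Agile Framework (SAFe) convention, where Cost of Delay is the sum of three relative estimates: user-business value, time criticality, and risk reduction or opportunity enablement. The function names are hypothetical, and it assumes all four inputs are relative numbers estimated on the same scale (SAFe teams often use a modified Fibonacci sequence).

```python
def cost_of_delay(business_value: float, time_criticality: float,
                  risk_reduction: float) -> float:
    """SAFe-style Cost of Delay: the sum of three relative estimates."""
    return business_value + time_criticality + risk_reduction


def wsjf(business_value: float, time_criticality: float,
         risk_reduction: float, job_size: float) -> float:
    """Weighted Shortest Job First: Cost of Delay divided by job size."""
    if job_size <= 0:
        raise ValueError("job_size must be positive")
    return cost_of_delay(business_value, time_criticality, risk_reduction) / job_size


# Two hypothetical backlog items scored relative to each other.
print(wsjf(8, 5, 3, job_size=4))    # 4.0
print(wsjf(13, 8, 5, job_size=13))  # 2.0
```

The smaller job wins here even though the larger one carries a higher Cost of Delay, which is the bias toward short, valuable jobs that the name describes.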

There are also many other product feature prioritization frameworks you can use, and your team will likely find some more appropriate than others under specific circumstances. But it’s worth pointing out that all the best frameworks have at least one thing in common: each guides your team’s efforts with some type of product feature documentation template. With the Opportunity Scoring model, for example, you’ll start with columns for measuring Customer Importance and Customer Satisfaction. These data points are meant to quantify how important your users consider each of your existing features to be, and how satisfied they are with those features today. Features that score high on importance and low on satisfaction represent high-value opportunities to improve your product and increase customer loyalty.
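A widely cited way to turn those two columns into a single number comes from Anthony Ulwick's outcome-driven innovation work: opportunity = importance + max(importance - satisfaction, 0). The sketch assumes both inputs have already been averaged onto a 0 to 10 scale; the function name is hypothetical.

```python
def opportunity_score(importance: float, satisfaction: float) -> float:
    """Importance + max(Importance - Satisfaction, 0), inputs on a 0-10 scale.

    Satisfaction above importance is clamped to zero, so an over-served
    feature is never penalized below its importance alone.
    """
    for label, value in (("importance", importance), ("satisfaction", satisfaction)):
        if not 0 <= value <= 10:
            raise ValueError(f"{label} must be between 0 and 10, got {value}")
    return importance + max(importance - satisfaction, 0)


# Hypothetical survey averages.
print(opportunity_score(importance=9, satisfaction=3))  # 15: important, underserved
print(opportunity_score(importance=4, satisfaction=8))  # 4: already well served
```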

Whichever of these prioritization frameworks you choose (and, in some cases, you might want to use more than one to score the same set of competing feature ideas), you’ll want to make sure your product feature roadmap includes your strategic reasoning for deciding to prioritize each one. This will help you communicate the importance and value of this work to your stakeholders across the company.

OK, this feature is Important. What does that mean?

To understand why prioritization is so intimidating to product managers, I tried to analyze the process. The conclusion I came to is this: the part that makes everyone uneasy is not deciding which feature to develop first, but evaluating its value and effort cost. When we are not given an explicit, unambiguous scale and tools to measure with, we start to lose our footing.

Since value and effort cannot be measured in inches or horsepower, we are left with a vague mechanism for grading these intangible things. In a sense, this is where the real prioritization takes place. Essentially, we find ourselves needing to establish a new scale that we can then use to quantify the seemingly unquantifiable.

How do we do that?

Grading Value

At Craft, we decided the smartest way to go about it is to use a ranking scale that is easy to process cognitively. Simply put: a scale from 1 to 10. It is a short scale, so users won’t be overwhelmed by numbers to choose from. It is also an intuitively familiar scale we use all the time for similar purposes (“on a scale of 1 to 10, how ugly is that sweater?” and so on).

In choosing this prioritization scale, we took special care to make life as simple as possible and take the frustration out of prioritization. The danger of overwhelming your team members with numeric choices is a grave one, because an overwhelmed team tends to avoid the task altogether, and then nothing has been accomplished. This grading scale is well within the comfort zone of most of our users, and the prioritization module has proved to be one of Craft’s great successes.

Grading Effort

Grading the effort should be, well, a collective effort. Since various team members will be involved in developing each feature, make sure they weigh in on the amount of resources it will take. Eventually, when the team has had enough experience designing and executing a variety of features, the project managers should be versed enough in the process to grade effort by themselves.
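When several team members weigh in, their grades still have to be combined into one number. The sketch below is one hypothetical way to do it, assuming effort is graded on the same 1 to 10 scale used for value: take the median, which a single outlier cannot drag far, and flag the feature for discussion when the grades are far apart. `combine_effort_grades` and the `max_spread` threshold are illustrative choices, not part of Craft.

```python
from statistics import median


def combine_effort_grades(grades: dict[str, int], max_spread: int = 3) -> tuple[float, bool]:
    """Combine each team member's 1-10 effort grade into one team grade.

    Returns (median grade, needs_discussion). needs_discussion is True when
    the highest and lowest grades differ by more than max_spread points.
    """
    if not grades:
        raise ValueError("at least one grade is required")
    for member, grade in grades.items():
        if not 1 <= grade <= 10:
            raise ValueError(f"{member}'s grade {grade} is outside the 1-10 scale")
    values = list(grades.values())
    return median(values), max(values) - min(values) > max_spread


# Hypothetical grades from the people who would build each feature.
print(combine_effort_grades({"backend": 6, "frontend": 4, "design": 5}))
# prints (5, False)
print(combine_effort_grades({"backend": 9, "frontend": 3, "qa": 5, "design": 4}))
# prints (4.5, True)
```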

This is why good task management is so important in product management software: you need to be able to measure the delta between the estimated time and the actual time each feature takes. Time tracking and optimization are what fuel process improvement. That said, it is important to make clear to the team that this is not about hounding them and measuring each minute of their schedule, but about getting an accurate estimate of resources to streamline future sprints.
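Measuring that delta can be as simple as comparing estimated and actual hours per finished feature. The sketch below assumes a hypothetical list of `(feature, estimated_hours, actual_hours)` records exported from whatever time-tracking tool the team uses; the function names are illustrative. The calibration factor summarizes how far estimates have drifted and can be applied to future estimates.

```python
from statistics import mean


def estimate_deltas(records: list[tuple[str, float, float]]) -> dict[str, float]:
    """Map each feature to actual minus estimated hours (positive = overran)."""
    return {name: actual - estimated for name, estimated, actual in records}


def calibration_factor(records: list[tuple[str, float, float]]) -> float:
    """Average ratio of actual to estimated hours across finished features.

    A factor of 1.25 suggests estimates have been running about 25% low.
    """
    ratios = [actual / estimated for _, estimated, actual in records if estimated > 0]
    if not ratios:
        raise ValueError("need at least one record with a positive estimate")
    return mean(ratios)


# Hypothetical records: (feature, estimated hours, actual hours).
records = [("Search", 40, 50), ("Export", 20, 20), ("SSO", 80, 120)]
print(estimate_deltas(records))     # {'Search': 10, 'Export': 0, 'SSO': 40}
print(calibration_factor(records))  # 1.25
```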

Measuring afterwards

It’s virtually impossible to measure whether the right features were prioritized at a given point in the product’s lifecycle. “What ifs” are hardly productive, and we can’t really know what would have happened had we gone ahead with a different set of features.

But we can measure rising popularity and get a sense of the product’s overall progress after each sprint:

- Collect feedback – get feedback from your users. If you didn’t ask them to participate in the prioritization process, ask them what they think about it now.

- Measure engagement with the new feature

- Social measuring – has your brand’s popularity gone up since the latest release? Is there more chatter about the product’s capabilities? Are you getting into more lists?
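For the engagement bullet, one common starting metric is adoption: the share of active users who tried the new feature at least once in a given period. The sketch below assumes a hypothetical usage log of `(user_id, feature_name)` pairs; real analytics tools record events differently, and adoption is only one engagement measure (usage frequency and retention are others).

```python
def adoption_rate(events: list[tuple[str, str]], feature: str,
                  active_users: set[str]) -> float:
    """Share of active users who used `feature` at least once.

    Repeat uses by the same user count once; events from users outside
    the active set are ignored.
    """
    if not active_users:
        raise ValueError("active_users must not be empty")
    adopters = {user for user, name in events
                if name == feature and user in active_users}
    return len(adopters) / len(active_users)


# Hypothetical usage log for one sprint.
events = [("u1", "search"), ("u2", "search"), ("u1", "search"), ("u3", "export")]
print(adoption_rate(events, "search", {"u1", "u2", "u3", "u4"}))  # 0.5
```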

If you’re looking for a tool to create Agile user personas, check out craft.io, great online software for smart product managers.